The second law of thermodynamics can be used to predict whether processes are forbidden despite obeying the requirement of conservation of energy as expressed in the first law of thermodynamics, and it provides necessary criteria for spontaneous processes. The law may be formulated by the observation that the entropy of isolated systems left to spontaneous evolution cannot decrease, as they always arrive at a state of thermodynamic equilibrium where the entropy is highest at the given internal energy. An increase in the combined entropy of system and surroundings accounts for the irreversibility of natural processes, often referred to in the concept of the arrow of time.

Historically, the second law was an empirical finding that was accepted as an axiom of thermodynamic theory. Statistical mechanics provides a microscopic explanation of the law in terms of probability distributions of the states of large assemblies of atoms or molecules. The second law has been expressed in many ways. Its first formulation, which preceded the proper definition of entropy and was based on caloric theory, is Carnot's theorem, formulated by the French scientist Sadi Carnot, who in 1824 showed that the efficiency of conversion of heat to work in a heat engine has an upper limit.
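To make the upper limit in Carnot's theorem concrete, here is a minimal sketch. The text above does not state the formula, so this assumes the standard modern form of the Carnot limit, efficiency = 1 - T_cold / T_hot, with both reservoir temperatures in kelvin. The function name `carnot_efficiency` is only illustrative.

```python
def carnot_efficiency(t_hot, t_cold):
    """Upper limit on heat-to-work efficiency between two reservoirs.

    Assumes the standard Carnot expression 1 - T_cold / T_hot,
    with both temperatures given in kelvin.
    """
    if t_cold <= 0 or t_hot <= 0:
        raise ValueError("temperatures must be positive kelvin values")
    if t_cold > t_hot:
        raise ValueError("t_hot must not be lower than t_cold")
    return 1.0 - t_cold / t_hot


# Example: an engine running between 500 K and 300 K
limit = carnot_efficiency(500.0, 300.0)
print(f"Maximum efficiency: {limit:.2f}")
```

For these example temperatures the limit is about 0.4, so no heat engine working between these two reservoirs could turn more than roughly 40% of the absorbed heat into work.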

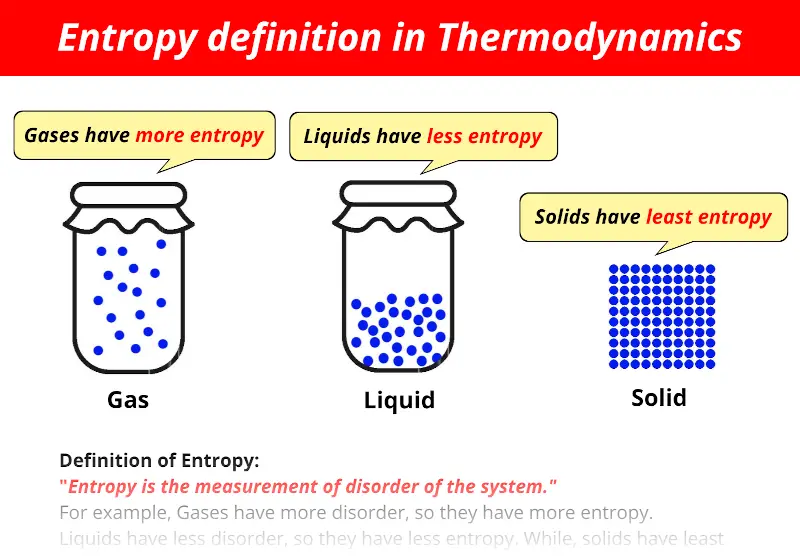

One simple statement of the law is that heat always moves from hotter objects to colder objects (or "downhill"), unless energy in some form is supplied to reverse the direction of heat flow. Another statement is: "Not all heat energy can be converted into work in a cyclic process." In other versions, the second law of thermodynamics establishes the concept of entropy as a physical property of a thermodynamic system.
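The "downhill" statement can be checked against the entropy statement with a short sketch. It assumes two bodies large enough that their temperatures stay constant while a quantity of heat q passes between them, and it uses the relation ΔS = q / T for each body. The function name `total_entropy_change` is hypothetical.

```python
def total_entropy_change(q, t_from, t_to):
    """Combined entropy change when heat q (J) leaves a body at t_from (K)
    and enters a body at t_to (K).

    Assumes both bodies are large reservoirs whose temperatures do not change.
    """
    loss = -q / t_from   # the body giving up heat loses entropy
    gain = q / t_to      # the body receiving heat gains entropy
    return loss + gain


# Heat flowing "downhill" from 400 K to 300 K
downhill = total_entropy_change(100.0, t_from=400.0, t_to=300.0)
# The reverse direction, from 300 K to 400 K, with no energy supplied
uphill = total_entropy_change(100.0, t_from=300.0, t_to=400.0)

print(f"hot to cold: {downhill:+.4f} J/K")
print(f"cold to hot: {uphill:+.4f} J/K")
```

The hot-to-cold transfer gives a positive total, while the reverse gives a negative total, which is why it does not happen unless energy is supplied from outside.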

The second law of thermodynamics is a physical law based on universal experience concerning heat and energy interconversions.

The temperature in equation (1) must be measured on the absolute, or Kelvin, temperature scale. On this scale, zero is the theoretically lowest possible temperature that any substance can reach. At absolute zero (0 K), the thermal motion of the atoms effectively ceases and the disorder in a substance is zero.

The absolute entropy of any substance can be calculated using equation (1) in the following way. Imagine cooling the substance to absolute zero and forming a perfect crystal (no holes, all the atoms in their exact place in the crystal lattice). Since there is no disorder in this state, the entropy can be defined as zero. Now start introducing small amounts of heat and measuring the temperature change. Even though equation (1) only works when the temperature is constant, it is approximately correct when the temperature change is small, so you can use it to calculate the entropy change for each small step. Continue this process until you reach the temperature for which you want to know the entropy of the substance (25 °C is a common temperature for reporting the entropy of a substance).

The Thermodynamics Table lists the entropies of some substances at 25 °C. Note that nonzero values are listed for elements, unlike ΔH°f values, which are zero for elements in their standard states. The reason is that the entropies listed are absolute, rather than relative to some arbitrary standard, as enthalpies are. This is because we know that a substance has zero entropy as a perfect crystal at 0 K; there is no comparable zero for enthalpy. The fact that a perfect crystal of a substance at 0 K has zero entropy is sometimes called the Third Law of Thermodynamics.
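The stepwise procedure for absolute entropy (start from a perfect crystal at 0 K, add small amounts of heat, and apply equation (1) at each step) can be sketched as a running sum of q / T. Everything numerical here is made up for illustration: the toy solid with heat capacity C(T) = a·T³, the constant `a`, and the helper names are assumptions, not data from the Thermodynamics Table.

```python
def absolute_entropy(heat_steps):
    """Sum q / T over small heating steps, starting from S = 0 at 0 K.

    heat_steps: iterable of (q, t_start, t_end), with q in joules
    and temperatures in kelvin.
    """
    entropy = 0.0  # perfect crystal at 0 K
    for q, t_start, t_end in heat_steps:
        # Within a small step the temperature is nearly constant,
        # so use the mean temperature of the step.
        t_mean = 0.5 * (t_start + t_end)
        entropy += q / t_mean
    return entropy


def toy_heat_steps(t_final, n_steps, a=1.0e-6):
    """Made-up 'measurements' for a toy solid with C(T) = a * T**3 (hypothetical)."""
    dt = t_final / n_steps
    steps = []
    for i in range(n_steps):
        t_start = i * dt
        t_end = t_start + dt
        # Heat needed to warm from t_start to t_end: integral of a*T^3 dT
        q = a * (t_end**4 - t_start**4) / 4.0
        steps.append((q, t_start, t_end))
    return steps


t_final = 298.15  # 25 °C expressed in kelvin
s_numeric = absolute_entropy(toy_heat_steps(t_final, n_steps=10_000))
s_exact = 1.0e-6 * t_final**3 / 3.0  # analytic entropy for the toy model
print(f"stepwise sum: {s_numeric:.6f} J/K, analytic: {s_exact:.6f} J/K")
```

With many small steps the sum agrees closely with the analytic value for the toy model, which illustrates why equation (1) remains usable when each temperature change is small.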

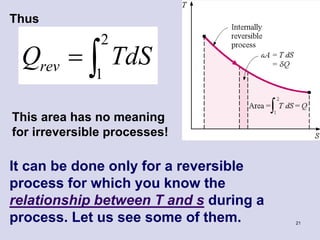

One useful way of measuring entropy is by the following equation:

ΔS = q / T   (1)

where S represents entropy, ΔS represents the change in entropy, q represents heat transfer, and T is the temperature. Using this equation it is possible to measure entropy changes using a calorimeter.
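As a worked illustration of the relation ΔS = q / T, the sketch below computes the entropy change for melting one mole of ice at its melting point, where the temperature stays constant. The heat of fusion (about 6.01 kJ/mol) is a commonly quoted value supplied here as an assumption; it does not come from the text, and the function names are illustrative.

```python
def celsius_to_kelvin(t_celsius):
    """Convert a Celsius temperature to the absolute (Kelvin) scale."""
    return t_celsius + 273.15


def entropy_change(q, t_kelvin):
    """Entropy change ΔS = q / T for heat q (J) transferred at constant T (K)."""
    if t_kelvin <= 0:
        raise ValueError("temperature must be a positive kelvin value")
    return q / t_kelvin


# Melting 1 mol of ice at 0 °C; q is an assumed, commonly quoted heat of fusion
q_fusion = 6010.0                      # J per mole
t_melt = celsius_to_kelvin(0.0)        # 273.15 K
delta_s = entropy_change(q_fusion, t_melt)
print(f"ΔS of fusion: {delta_s:.1f} J/(mol·K)")
```

The result is roughly 22 J/(mol·K). Using a Celsius temperature directly in the formula would divide by zero here, which is one practical reason the temperature must be on the Kelvin scale.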
